Notes from the Digital Humanities, Human Technologies / AHRC NWCDTP Postgraduate Conference 2018 / part 1

https://nwcdtpconference2018.wordpress.com/home/

John Merrill [MMU] presented his project, Portrait as Landscape: Rendering the Unseen surface of the face. He began by explaining some ideas behind visual perception, the evolution of the eye and its flaws, which made a great introduction to the project. Merrill creates digitally processed, high-contrast, large-scale photographs that sharpen and enhance the surface topography and textures of the face. The idea is to draw attention away from the character of the person pictured, and away from the stereotypes bound up in how we recognise faces and make judgments about character. Instead, we are left to think about the more visceral aesthetic details of texture, form and shape.

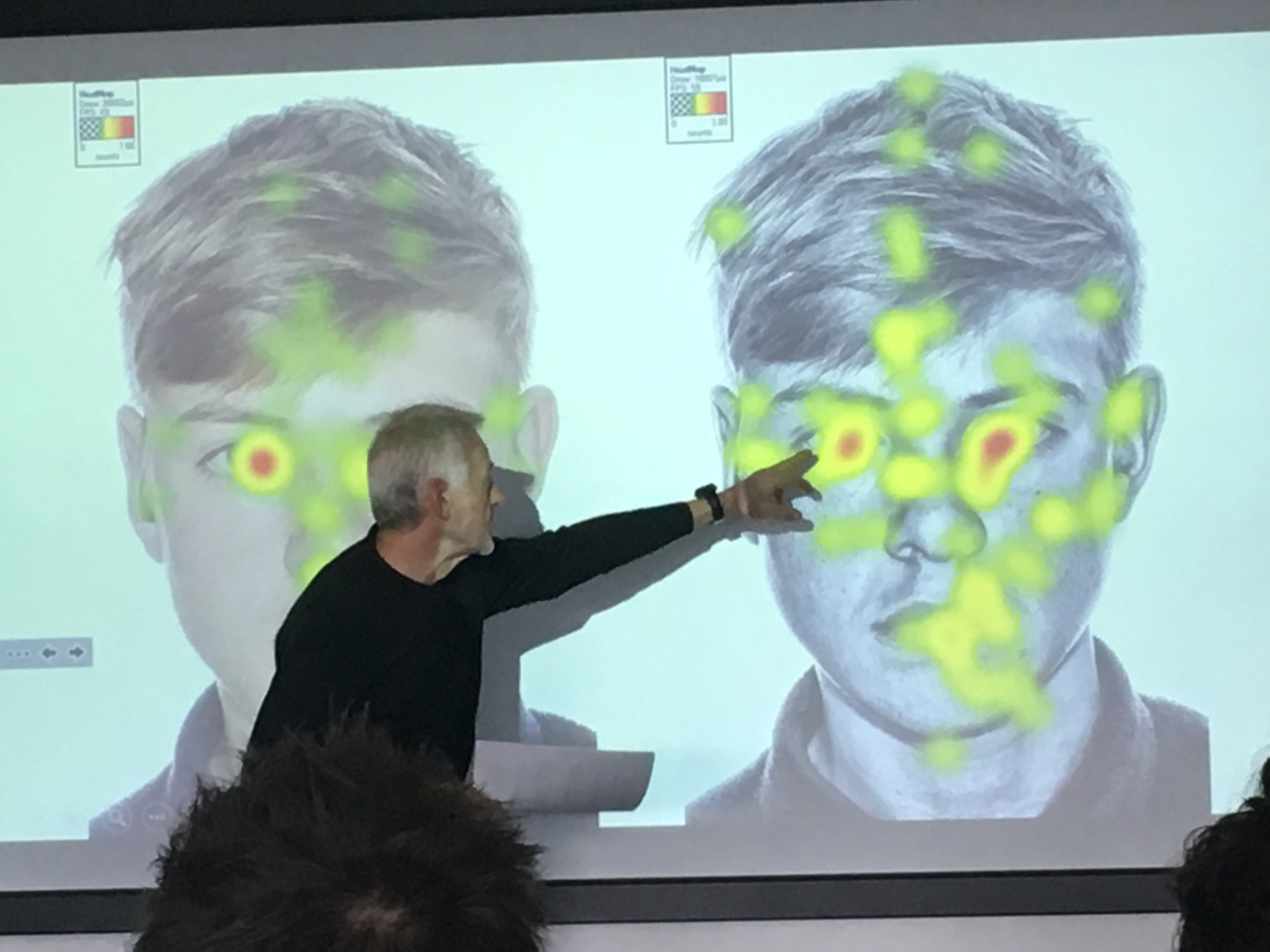

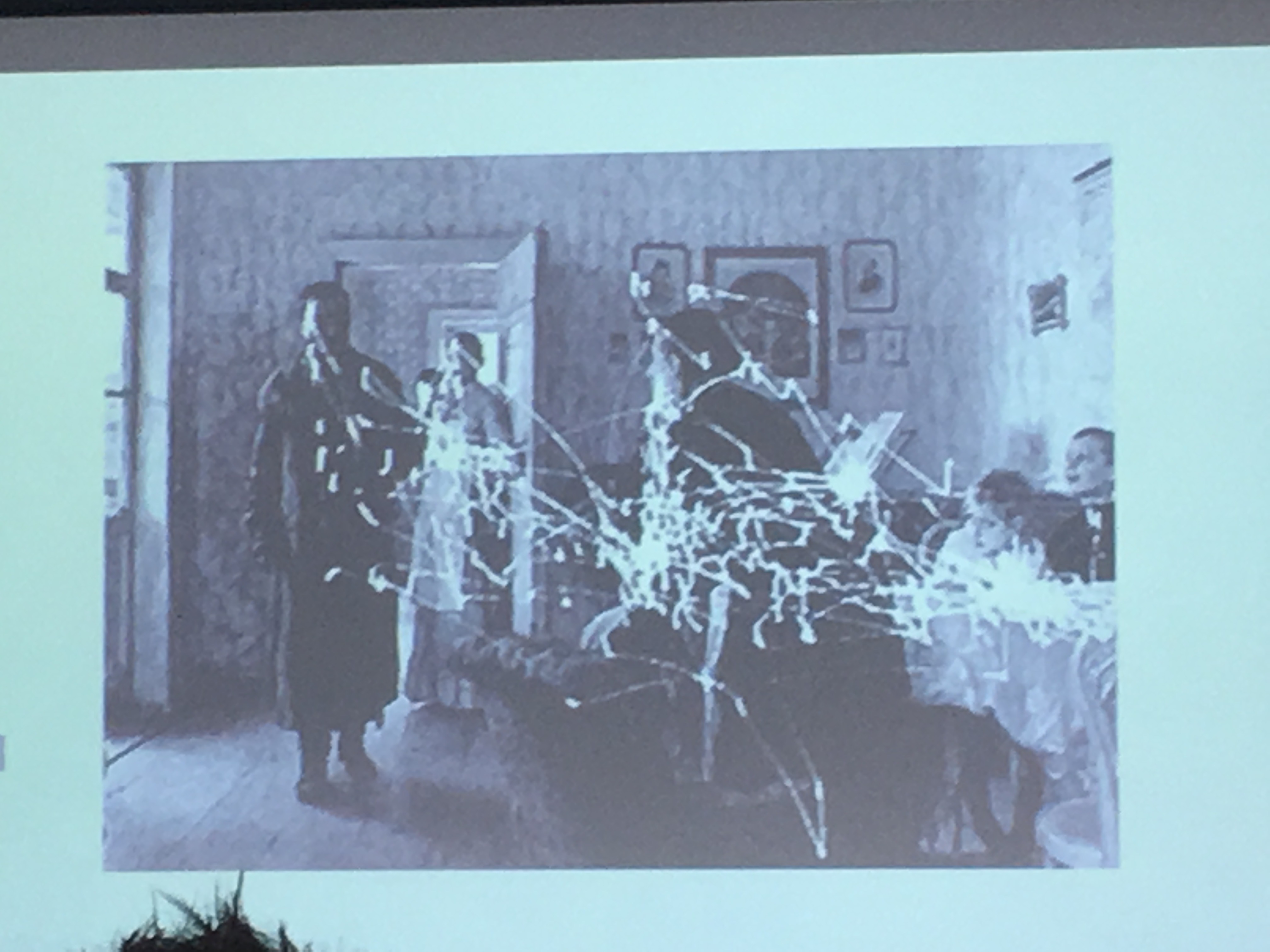

Dr Zofija Tupikovskaja-Omovie [MMU] presented her project, Eye Tracking Technology in Creative Practice research. She used a mobile eye tracking device to capture the visual gaze of an artist [writer and photographer] as she walked, making work in response to what she saw. This provided an amazing insight into how and why she selected images [visual and poetic]. For example, an image of graffiti on a wall came about through her careful investigation of the social detritus, debris, rubbish and weeds at the base of the wall; one might not otherwise have noticed these in the periphery of the image.

A flaw of eye tracking is that although it can track the central focus of the eye, this does not really tell us what is being looked at. I might look at your mouth or your eyes while you speak, but your hands and arms might be saying just as much: it’s possible to detect gestures and rhythms, an open palm or a pointing finger, in the periphery. If you tried focussing on these instead, following the fast movements might be distracting, leaving you unable to concentrate on the conversation. As mentioned in Merrill’s talk, it is chiefly the central region of the eye that detects colour, and it is many times more detailed than the peripheral region of our vision. In the peripheral region we see less detail and very little colour, but our peripheral sight is much better at detecting movement and change. Merrill went on to show some of the intriguing strangeness of the eye’s flawed design. But rather than a flawed design, perhaps there is a reason behind this? Should the eye be considered not only as a flawed optical sensor but also as an efficient perceptual filter, one that trades full-resolution optical clarity for more focused, specific and useful environmental information? Night vision, for example, is vastly better in the periphery, while the central region is almost useless for navigating in low light. I discovered this first hand through a recent experiment I participated in.

I was able to use some of this training in my extracurricular hobby of MTB racing. In cycling it’s wise to focus on the road ahead, not directly in front of the wheel. Rather than focussing on objects in the immediate collision line, one must target and plan a clear line ahead, and rely on the body to will the bike towards this point, trusting it to respond physiologically and proprioceptively to the terrain under the bike. A more general visual sense of direction, speed and immediate problems [collision objects] must be relied upon, along with automatic, subconscious, instinctive decisions.

Just try riding your bike on the yellow line at the side of the road. It’s much easier if you look far ahead. Translate this into trying not to ride into a rut, or riding a narrow ledge between rocks at speed.

What we actually see with our eyes is only a small part of the subjective perceptual image, or environmental model, that we build inside our mind. This model is based not just on what we see but also on assumptions and expectations drawn from the spaces and environments we have previously encountered. Our memories and knowledge of the environment can be used to predict, buffer and complement the visual.